Schwister Documentation

Here’s documentation of our new game Schwister!

Schwister is a team-based multiplayer competitive light-up tabletop arcade game that combines the spirit of Twister with the frenzy of Whack-A-Mole! Tiles randomly shuffle with each play, and two teams, red and blue, compete to turn off all their tiles before the other team. It works great in jovial environments where participants are passing by, drinking, and open to being a little silly while hitting cool light-up, pressure-sensitive LED tiles on a 4′ x 4′ tabletop!
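For the curious, here is a minimal sketch of the core rules described above: shuffle the tiles between the two teams, turn a tile off when it is pressed, and declare a winner once a team has no lit tiles left. This is not our actual game code; the function names, the even split between teams, and the 16-tile count in the usage note are assumptions made purely for illustration.

```python
import random


def shuffle_tiles(num_tiles, rng):
    """Randomly split tile indices between the red and blue teams.

    Assumption: tiles are divided as evenly as possible between the teams.
    """
    tiles = list(range(num_tiles))
    rng.shuffle(tiles)
    half = num_tiles // 2
    return {"red": set(tiles[:half]), "blue": set(tiles[half:])}


def press(lit, tile):
    """Turn off a pressed tile if it is still lit.

    Returns the name of the team that just cleared its last tile,
    or None if the round is still going.
    """
    for team, remaining in lit.items():
        if tile in remaining:
            remaining.discard(tile)
            if not remaining:
                return team
    return None
```

A round would start with something like `lit = shuffle_tiles(16, random.Random())` (16 is a hypothetical tile count), then call `press(lit, tile)` each time a pressure sensor fires until it returns a team name.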

The game is currently an advanced prototype, but we’ve strutted our stuff at Camp Wavelength 2017 and Ubisoft Night Circus! Want it for your event? Schwister is both modular and transportable, so reach out to us 🙂

We would like to thank our Technical Consultant Christopher Thomas, and RJ Bethune for scoring the documentation of our project.

Scream Whistle 2017

SCREAM WHISTLE

October 27 & October 28, 2017 – 9:00 PM

Hopkins Duffield and Daeve Fellows are designing the main stage for Steam Whistle’s 2017 Halloween Party! See you there!

Camp Wavelength 2017

Wavelength Night Camp 2017

August 19 & August 20, 2017 – 7:30 PM

We have a wicked new project for Camp Wavelength 2017 called Schwister! Schwister is a team-based multiplayer competitive light-up tabletop arcade game that combines the spirit of Twister with the frenzy of Whack-A-Mole.

Wavelength Music is a curated concert series designed to champion creativity, co-operation and collaboration in the independent music and arts scenes. Established in 2000, Wavelength Music is a non-profit arts organization that puts artists and the community first. A cornerstone of the Toronto music scene, it has championed literally thousands of emerging artists during its decade-plus run. Due to the flooding on Toronto Island, for this year only, Camp Wavelength 2017 becomes DAY CAMP IN THE CITY!

AUGUST 19 NIGHT CAMP LINEUP:

Dilly Dally

Rich Aucoin

Duchess Says

Bedroomer DJs: Internet Daughter + Eytan Tobin + Kare + DJ New Jewel Movement & Friends

AUGUST 20 NIGHT CAMP LINEUP:

Deerhoof

The Luyas

Emilie & Ogden

See you there!

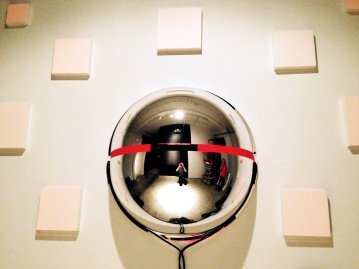

Documentation of Panoptidrome

We finally recovered some documentation after Kyle’s laptop got stolen! Here is documentation of Panoptidrome! If you’ve been following our work, you may notice that since then, some of the components of this project have been reincorporated / remixed into new projects! 😉

Videodrome 2015 at the Museum of Contemporary Canadian Art was a blast! We are grateful to have been involved with such a talented and supportive community for many years.

Panoptidrome Documentation from Hopkins Duffield on Vimeo.

For the show, we created an audio-video surveillance sculpture named Panoptidrome, which tracked the faces of people in the space and created a 3-D scan of them. The scan was then videomapped onto square tiles on the wall. This was the first time we used a Kinect V2, and the first time Kyle had used Windows in over 10 years! The Kinect V2 was hooked up to Max 7 and used Dale Phurrough’s dp.kinect2 external object.

We would like to thank Dann Hines, John Scarpino, and Vanessa Shaver for their help during the development of this project. Also, thanks to Geoff Bland for some shots of the installation at the event!

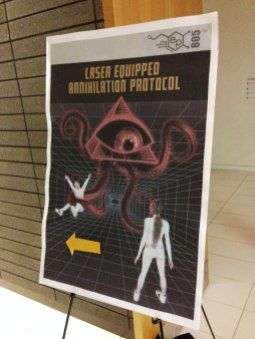

Laser Equipped Annihilation Protocol Updates

Now that it’s 2017, we thought that we’d do a recap of Laser Equipped Annihilation Protocol’s progress in 2016!

To kick off, HopDuff was featured on Discovery Channel’s Daily Planet for their Future Tech segment on January 28, which you can watch here!

Next, we were able to develop the project further with the help of the Toronto Arts Council Media Arts Grant! New friends were met, new high scores were created, and L.E.A.P. got a whole lot nastier with our Verbal Abuse Training Camp sessions! Below is a video of some of our play testing!

As an awesome and epic finale to the year, we lasered-up Ubisoft’s Holiday Party, which was a blast! We’ve never seen the project on such a large presentation scale before! Low-quality photos were taken by ourselves and Isaac Rayment, awesome-quality ones by Jordan Probst!

ENDLESS CITY / FORMS FESTIVAL

Hopkins Duffield are proud to announce our involvement in three events at Forms Toronto 2016! We’re doing one talk, one panel, PLUS we will be lighting up the bar at The Great Hall with our sound reactive LED tiles!

ARTIST TALK: TOOLS AND PROCESSES FOR INTERACTIVITY

September 28 – 2:00 PM

Description:

Toronto-based collaborative duo Hopkins Duffield work in audio, video, installation, electronics, gaming, and performance disciplines. Drawing from experiences collaborating within the Toronto arts, education, gaming, and maker communities, they explore ways of combining both new and familiar mediums to create technologically altered interactive intermedia projects. During this talk Hopkins and Duffield will describe their approach to digital making and designing sensor-based objects that react to presence and touch.

FORMS1 OPENING NIGHT

September 28 – 8:00 PM

Description:

Come back after our talk and check out Forms Festival’s opening night Forms1! Our sound reactive LED tiles will be lighting up the Absolut Vodka bar at the Great Hall alongside the work and performances of NONOTAK, SUSY.TECHNOLOGY, ANIMA, MWM, BAMBII, LYDIAN, SJAMSOEDIN + VS.VS and more!

SUMMIT PANEL TALK: THE SCIENCE OF CREATING

September 29, 2016 – 6:00 PM

Description:

The aim of this talk is to explore the applied practices arrived at through the convergence of technology and creativity. Here, we have convened a fantastic and diverse panel from an array of disciplines spanning interaction design, digital media, advertising, food and architecture who will meet to examine this junction of science and creativity and discuss how this confluence is quickly blurring traditional divisions between disciplines and informing new concepts in creating, modelling and making.

Process of LED Tile Creation

Here are some pics of our process for assembling the Sound Reactive LED tiles we developed at Electric Perfume. This was our first time using LED strips, our first time powering something this large, and our first time using Processing in an exhibition! We also had the challenge of creating a water-resistant design, since the tiles sat on a bar top where liquids are likely to be spilled. As always, there are things we would love to improve with more time, but the project was a success!

We created twenty-four tiles for this project. Aside from some of the hardware, to create the guts we used:

- Arduino Mega 2560 so the tiles can also be used as switches if pressed

- Two Fade Candys to drive the LEDs

- Twenty-four metres of low-density (30 per metre) addressable RGB LEDs (WS2812B)

- An ATX power supply which could output up to 50 amps of current

- Processing with the Fade Candy and Arduino library to control all the logic behind the tiles
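To give a sense of why the parts list looks the way it does, here is a rough back-of-the-envelope budget for the numbers above. It is only a sketch: the 60 mA per LED figure is the commonly quoted worst case for a WS2812B at full white, the 8 outputs of 64 LEDs per Fade Candy board is that board's usual limit, and we assume the strip is split evenly across the tiles and that the supply's 50 amps are available on the rail feeding the LEDs. The function name `led_budget` is made up for this example.

```python
import math

# Figures taken from the parts list above.
TILES = 24
STRIP_METRES = 24
LEDS_PER_METRE = 30
SUPPLY_AMPS = 50

# Assumptions (not from the parts list).
MA_PER_LED_FULL_WHITE = 60   # typical worst-case draw for one WS2812B
FADECANDY_OUTPUTS = 8        # outputs per controller board
LEDS_PER_OUTPUT = 64         # maximum LEDs per output


def led_budget():
    """Estimate LED count, peak current, and controller boards needed."""
    total_leds = STRIP_METRES * LEDS_PER_METRE
    peak_amps = total_leds * MA_PER_LED_FULL_WHITE / 1000
    leds_per_board = FADECANDY_OUTPUTS * LEDS_PER_OUTPUT
    return {
        "total_leds": total_leds,
        "leds_per_tile": total_leds // TILES,  # assumes an even split
        "peak_amps": peak_amps,
        "headroom_amps": SUPPLY_AMPS - peak_amps,
        "boards_needed": math.ceil(total_leds / leds_per_board),
    }


if __name__ == "__main__":
    for name, value in led_budget().items():
        print(f"{name}: {value}")
```

Under these assumptions the strip works out to 720 LEDs, which is more than a single board can address, and the worst-case draw stays under the supply's rated output. In practice the tiles rarely sit at full white, so the real draw should be lower.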

Once again, thanks to our amazing team of helpers: dAeve Fellows, Pete OHearn, Kayla Free, John Scarpino, and our Technical Consultant Christopher Thomas! Also, thanks to Absolut, BOOM Marketing, and Young Lions Music Club for inviting us to participate in making something happen.

Documentation of HopDuff @ Absolut Nights Toronto

Absolut Nights Toronto was a fantastic night! We had our Sound Reactive LED tiles and projections pumping to the tunes with good people, good vibes, and great drinks!

Here is some of our documentation of our LED tiles, with more to come! We’ll also be posting documentation of our process, so stay tuned! We want to give a mega thanks to Absolut Vodka, BOOM Marketing, Young Lions Music Club, and 2nd Floor Events. This project would not have happened without our awesome team: our Technical Consultant Christopher Thomas, Daeve Fellows, Pete OHearn, Kayla Free, John Scarpino, and Mike Duffield. We also want to toss a thanks out to Alex Leitch for being our LED hookup, and to the always supportive Creatron Inc.

Absolut Nights Process Shots and Interviews

We’ve been workin’ away at creating our LED tiles for Absolut Nights Toronto on April 22, 2016. Here are some pictures of us hacking at it! Photos taken by the super talented Supermaniak.

For details about the event check out the Facebook Page here!

Absolut Nights Toronto

Hopkins Duffield is lighting up Absolut Nights!

From Facebook Event:

In a city of trendsetters where fashion, art and culture are the norm, some artists go above the ordinary in the name of creativity.

To celebrate the bold, the unconventional, the designers, tinkerers and creators, we’re collaborating with visionary Toronto Makers to transform the night.

With a striking display of lights and projection mapping, the collaborative duo Hopkins Duffield will light up the night at 2nd Floor Events on April 22. From illuminating touch-sensitive tiles to a holograph-inspired display that reacts to music and sounds, Daniele Hopkins and Kyle Duffield will hack the bar with multisensory installations to transform your cocktail experience.

We’re ready to see how a powerhouse duo makes the night at Absolut Nights Toronto. Are you?

Tear up the dance floor with music by Falcons, Promnite, Internet Daughter and AV, then refresh and re-energize with a classic Mind Eraser cocktail to keep the party going.
